
- On Finding a To-Do Setup That Works

- Publishing my Emacs Configuration using Gitea Actions

- Quitting 100 Days To Offload

- hledger for personal finances: two months in

- My Emacs Package of the Week: CRUX

- Using stow for managing my dotfiles

- Small changes to my website design

- Another Update on Publishing my Emacs Configuration

- Mirroring my Gitea Repos with Git Hooks, again

- Why I failed using Org-mode for tasks

- Using Emacs tab-bar-mode

- Publishing my Website using GitLab CI Pipelines

- My Emacs package of the week: org-appear

- Update on Publishing my Emacs Configuration

- Publishing My Emacs Configuration

- Update on my Org-roam web viewer

- RSS aggregators and a hard decision

- My Emacs package of the week: orgit

- New Project: Accessing my Org-roam notes everywhere

- Improving my new blog post creation

- How this post is brought to you…

- 100 Days To Offload

- Updates to my website

- Automatic UUID creation in some Org-mode files

- „Mirroring“ my open-source Git repos to my Gitea instance

- Switching my Website to Hugo using ox-hugo

- Quick Deploy Solution

- Updated: Linux Programs I Use

- Firefox tab bar on mouse over

- Scrolling doesn't work in GTK+ 3 apps in StumpWM

- Disabling comments

- Moving the open-source stuff from phab.mmmk2410 to GitLab

- Cavallino-Treporti (IT) Bicycle Tour 1

- Netzwerkseminar

- Der Drucker

- Rangitaki Version 1.5.0

- Quote by Wang Li

- Rangitaki Version 1.4.4

- Morse Converter Web App 0.3

- Rangitaki Version 1.4.3

- Rangitaki Version 1.4

- How to run a web app on your desktop

- Rangitaki Version 1.3

- Programs I use

- Music recording "The Ending Year"

- Musikstück "The Ending Year"

- Rangitaki Version 1.2

- Rangitaki Version 1.1.90 Beta Release

- Rangitaki Version 1.1.2 Development Release

- Scorelib

- In the lab

- Winter is coming…

- Rangitaki Version 1.1.0 Development Release

- New piece coming soon

- Rangitaki Version 1.0

- Morse Converter Android 2.4.0

- Morse Converter Desktop Version 2.0.0

- Landesverrat

- Artikel vom 15.04.2015

- Konzept zur Einrichtung einer Referatsgruppe 3C „Erweiterte Fachunterstützung Internet“ im BfV

- Hintergründe, Aufgaben und geplanter Aufbau der EFI

- Referat 3C1: „Grundsatz, Strategie, Recht“

- Referate 3C2 und 3C3: „Inhaltliche/technische Auswertung von G-10-Internetinformationen“

- Referate 3C4 und 3C5: „Zentrale Datenanalysestelle“

- Referat 3C6: „Informationstechnische Operativmaßnahmen, IT-forensische Analysemethoden“

- Personalplan der Referatsgruppe 3C „Erweiterte Fachunterstützung Internet“ im BfV

- Referatsgruppe 3C: Erweiterte Fachunterstützung Internet

- Referat 3C1: Grundsatz, Strategie, Recht

- 3C1: Querschnittstätigkeiten

- 3C1: Serviceaufgaben

- 3C1: Bearbeitung von Grundsatz-, Strategie- und Rechtsfragen EFI

- 3C1: Zentrale Koordination der technisch-methodischen Fortentwicklung, Innovationssteuerung

- 3C1: Bedarfsabstimmungen mit den Fachabteilungen

- 3C1: Zusammenarbeit mit weiteren Behörden

- Referat 3C2: Inhaltliche/technische Auswertung von G-10-Internetinformationen (Köln)

- 3C2: Technische Auswertung von G-10-Internetdaten

- Referat 3C3: Inhaltliche/technische Auswertung von G-10-Internetinformationen (Berlin)

- 3C3: Technische Auswertung von G-10-Internetdaten

- Referat 3C4: Zentrale Datenanalysestelle (Köln)

- 3C4: Analyse von Datenmengen (methodischen Fortentwicklung, Evaluierung von neuen IT-Verfahren zur Datenanalyse, Abstimmung mit Kooperationspartner in diesen Angelegenheiten)

- 3C4: Technische Unterstützung

- Referat 3C5: Zentrale Datenanalysestelle (Berlin)

- 3C5: Analyse von Datenmengen (methodische Fortentwicklung, Evaluierung von neuen IT-Verfahren zur Datenanalyse, Abstimmung mit Kooperationspartner in diesen Angelegenheiten)

- 3C5: Technische Unterstützung

- Referat 3C6: Informationstechnische Operativmaßnahmen, IT-forensische Analysemethoden

- 3C6: Unkonventionelle TKÜ

- Konzept zur Einrichtung einer Referatsgruppe 3C „Erweiterte Fachunterstützung Internet“ im BfV

- Artikel vom 25. Februar 2015

- Artikel vom 15.04.2015

- Morse Converter Desktop Public Beta 1.9.3

- Rangitaki Version 0.9: Release Condidate for 1.0

- Rangitaki Version 0.8

- Rangitaki Version 0.7 - The alpha release

- A new design for marcel-kapfer.de

- Rangitak version shedule until 1.0

- Rangitaki Version 0.5 and Material Design

- Morse Converter Android App Version 2.2.7

- Morse Converter Android App Beta testing

- Rangitaki Version 0.2.2

- From pBlog to Rangitaki

- Abitur und Weisheitszaehne

- Web App Alpha Release

- pBlog Version 2.1

- About the Future of pBlog

- pBlog Version 2.0

- Morse Converter Android Version 2.1

- Morse Converter Debian Package

- pBlog Version 1.2

- pBlog Version 1.1

- Week in Review

- pBlog Version 1.0

- Material Bildschirmhintergründe 1 und 2

- pBlog Version 0.3

- pBlog Version 0.2

- Morse Converter Android Version 2.0

- Material Wallpapers 1 and 2

- Morse Converter Desktop Version 1.1.1

- Morse Converter Desktop Version 1.1

- Blog (Experimental)

- The Ending Year published

- UPDATE: Bash script for LaTeX users

- UPDATE: Bash Skript für LaTeX Benutzer

- Bash script for LaTeX users

- Bash Skript für LaTeX Benutzer

- Morse Converter Android Version 1.0.1

- Morse Converter Desktop Version 1.0.2

- Morse Converter Desktop Version 1.0.1

- Comfortaa Font for Cyanogenmod Theme Engine

- Morse Converter sourcecode now on GitHub

- Comfortaa Font für Cyanogenmod Theme Chooser

- Morse Converter Android App Version 1.0

- Morse Code Konverter Android App Version 1.0

- Morse Code Converter Version 1.0.0

- Morse Converter Version 1.0

- Morse Converter Version 1.0.0

- Punktebilanz

- Morse Converter Version 0.2.2

- Morse Converter Version 0.2.1: First public release

- The writtenMorse website is online

- Morse Converter Version 0.2

- Morse Converter Version 0.1

- Installation of Debian 8 "jessie" testing

- Schöne ruhige Zeit

- 15. September 2013

- 02. August 2013

- 22. Juli 2013

- Meinungsfreiheit in Deutschland?

DONE On Finding a To-Do Setup That Works orgmode gtd tasks pim

CLOSED: [2023-05-22 Mon 17:49]

- State "DONE" from "TODO" [2023-05-22 Mon 17:49]

How many to-do apps have you already tried? All of them? Did you find one that "works" for you? No? Well, you're certainly not alone.

The Endless Search

I tried a fair share of apps and setups, but all of them seemed to fail sooner or later. Whether it was a plain paper notebook I kept in my pockets, a custom Emacs Org-Mode setup or apps like Nextcloud Tasks, Trello or Todoist, I discarded each one of them after a while. And it took me some years to realize why, and how to resolve this dilemma.

In search of managing my life a bit better and handling tasks more proficiently, I read and worked through David Allen's book "Getting Things Done" (GTD) starting in January 2022 and implemented his methodology in Todoist about a year ago. Only after some time did I slowly realize that I hadn't stopped using the app. And I'm still following the GTD methodology as closely as possible, even after switching back to Emacs Org-Mode in December 2022 for obvious privacy concerns.

A Different Problem

Perhaps the "problem" was not all the apps and setups out there, but myself! Don't get me wrong, there are certainly some applications that are just not good or don't provide the features I truly need. But that's beside the point. Successfully maintaining a to-do system is not determined by finding the right app that just magically works for you. No, it is a state of mind. Whether an app fits you or utterly fails comes only down to how you use it.

So, if you're in the same place as I was and cannot find an app that "functions reliably", even after trying almost everything out there and wasting countless hours searching for programs and migrating between different setups, then quite possibly you should first find a system that suits you. Only then search for a solution (whether digital or on paper doesn't matter) that best supports that system.

I'm not saying that GTD is necessarily the right methodology for you. It works for me, but you very likely have different requirements and a different life than I do, and perhaps another system is better suited for you. Take some time to learn about the different ideas that are available and try them out. In the long run, investing time in finding, learning and implementing a methodology that fits your life and your tasks and supports you is certainly worth it.

Finally, keep in mind that a system does not maintain itself! It is mandatory that you invest time regularly into maintaining the system and keeping it alive and running! If you don't do this, then you have yet another system and app to put on your "doesn't work for me" list. Once you have established a system that works for you, every minute and every hour you spend keeping your system up is time saved.

DONE Publishing my Emacs Configuration using Gitea Actions @code emacs orgmode cicd pipelines gitea emacs

CLOSED: [2023-04-02 Sun 13:05]

- State "DONE" from "TODO" [2023-04-02 Sun 13:05]

About a year ago I wrote a few blog posts about publishing my Emacs configuration, most recently using a GitLab pipeline. This has worked fine ever since and I had zero problems with the pipeline. Although I'm using the GitLab CI feature for this, I don't use GitLab for hosting the repository. My dot-emacs repository over there is just a mirror; the main one is in my personal Gitea instance.

So, a few days ago, Gitea 1.19.0 was released with an experimental feature called "Gitea Actions". This is a pipeline implementation like GitLab Pipelines or GitHub Actions. And since I didn't have anything better to do yesterday I decided to give this thing a try and publish my Emacs configuration using it.

The runner for Gitea Actions is an adjusted fork of nektos/act which is a tool for running GitHub Actions locally. This means that the Gitea Runner is largely compatible with the GitHub Actions Workflow format. If I understand it correctly, most GitHub Action definitions should "just" work without any adjustments.

I followed the guide from the Gitea blog for enabling the feature in the Gitea configuration and installing the Gitea Act runner. Afterwards, I started migrating the pipeline script from the GitLab CI format to the GitHub/Gitea format. Since I had never used GitHub Actions before, I ran into a few problems and misunderstandings before I had a successful configuration of the runner (as it turned out: the defaults work just fine, but my adjustments didn't) as well as of the workflow itself.

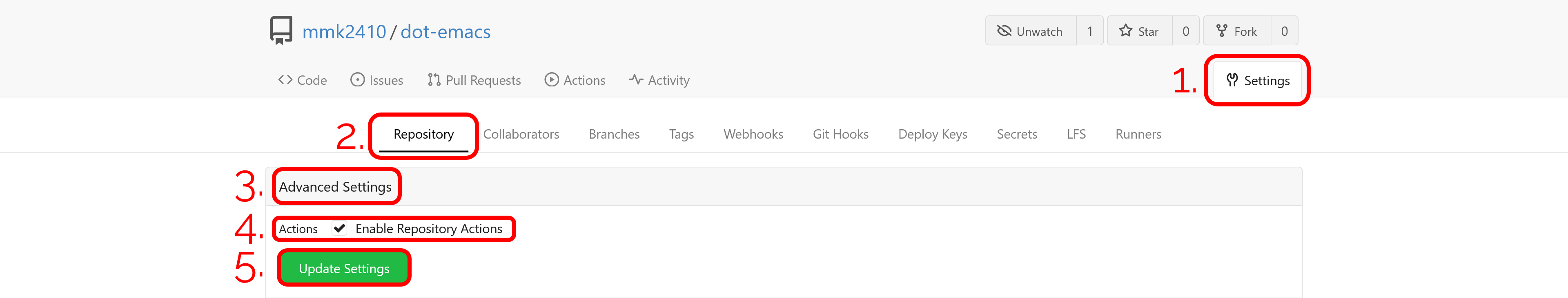

Given a successful runner installation and configuration, it is necessary to activate the Gitea Actions for the dot-emacs repository.

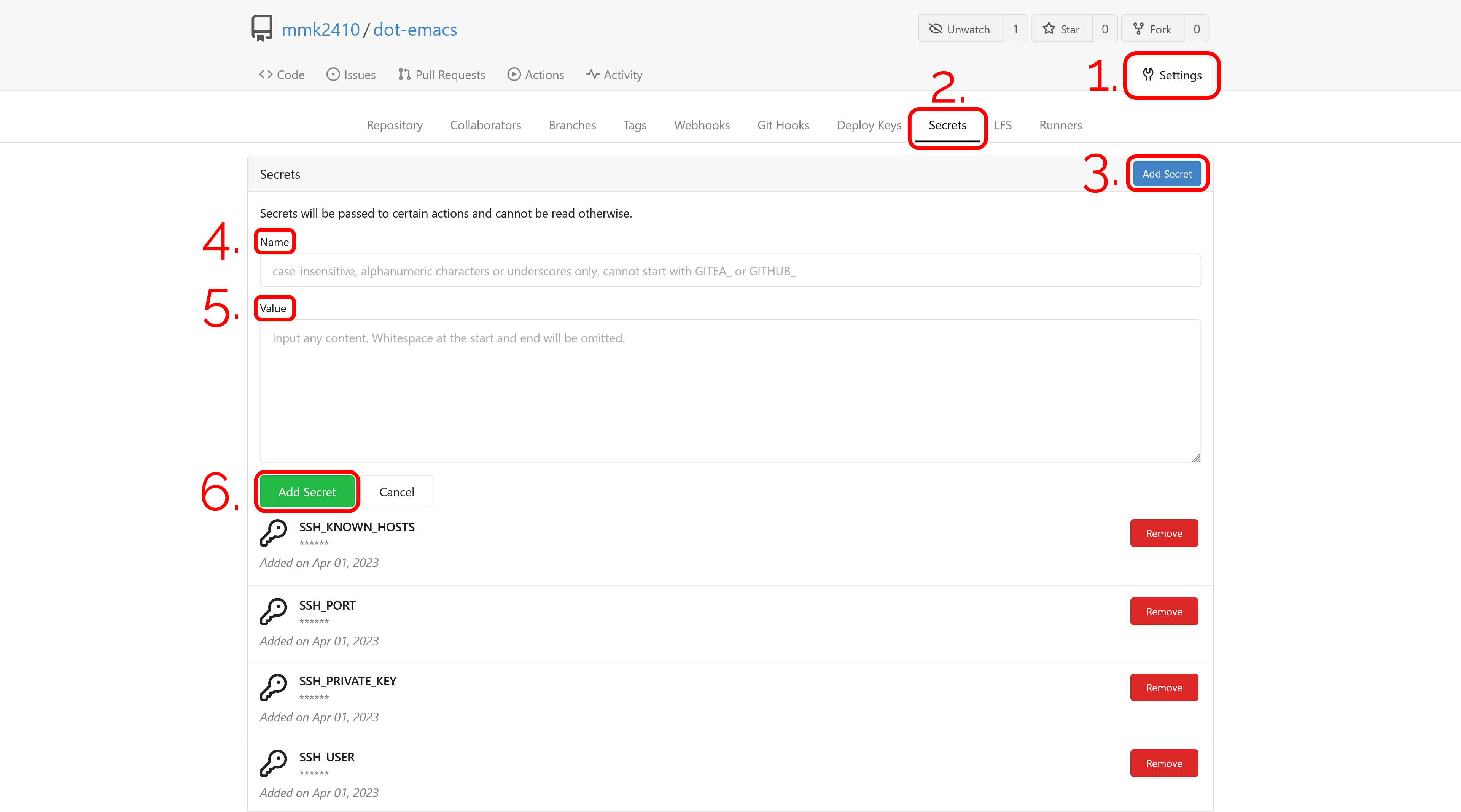

Then I needed to declare some secrets for the publish job to deploy the changes to my server using rsync. At the moment I keep using the gitlab-ci user I already created and configured. So I copied the four secrets SSH_PRIVATE_KEY, SSH_KNOWN_HOSTS, SSH_PORT and SSH_USER from GitLab to Gitea. If you're following along, save the variables somewhere else (e.g. a password store) since, contrary to GitLab, you are not able to view or edit Gitea secrets after saving them.

Now I can add and push my new Gitea workflow configuration, which I placed in the repository at .gitea/workflows/publish.yaml.

    name: Publish

    on:
      push:
        branches:
          - main

    jobs:
      publish:
        runs-on: ubuntu-latest
        container: silex/emacs:28.1-alpine-ci
        steps:
          # rsync uploads the site, nodejs is required by actions/checkout
          - name: Install packages
            run: apk add --no-cache rsync nodejs

          - name: Add SSH key
            run: |
              mkdir ~/.ssh
              chmod 700 ~/.ssh
              echo "$SSH_PRIVATE_KEY" | tr -d '\r' > ~/.ssh/id_ed25519
              chmod 600 ~/.ssh/id_ed25519
              echo "$SSH_KNOWN_HOSTS" | tr -d '\r' >> ~/.ssh/known_hosts
              chmod 644 ~/.ssh/known_hosts
            env:
              SSH_PRIVATE_KEY: ${{secrets.SSH_PRIVATE_KEY}}
              SSH_KNOWN_HOSTS: ${{secrets.SSH_KNOWN_HOSTS}}

          - name: Check out
            uses: actions/checkout@v3

          - name: Build publish script
            run: emacs -Q --script publish/publish.el

          - name: Deploy build
            run: |
              rsync \
                --archive \
                --verbose \
                --chown=gitlab-ci:www-data \
                --delete \
                --progress \
                -e "ssh -p $SSH_PORT" \
                public/ \
                "$SSH_USER"@mmk2410.org:/var/www/config.mmk2410.org/
            env:
              SSH_USER: ${{secrets.SSH_USER}}
              SSH_PORT: ${{secrets.SSH_PORT}}

Essentially, not much changed compared to the GitLab CI version. As a base image, I decided to go with the silex/emacs image using Emacs 28.1 on top of Alpine Linux. I additionally restricted the job to only run on pushes to the main branch. While I haven't worked with any other branches until now, this is a possibility I'd like to keep open without destroying the website.

The rest of the workflow itself is still quite the same. First, we install the necessary packages. We need rsync for uploading the resulting website to my server and nodejs for actions/checkout@v3. Then I add the private key to the build job, and this works a bit more easily since a running ssh-agent is not needed (apparently for GitLab there was no way around this). After checking out the repository code I execute my publish.el Emacs Lisp script, which generates a nice HTML page from my org-mode-based Emacs configuration. The last thing to do is to trigger the upload of the resulting files using rsync.

Although the Gitea Actions file is more verbose and longer than its GitLab equivalent, I slightly prefer it due to the option to name the individual build steps. This is something I have come to enjoy quite a bit from writing and using Ansible playbooks.
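The "Add SSH key" step can also be tried outside the pipeline. What follows is only a minimal sketch in POSIX shell: install_ssh_material is a hypothetical helper name and SSH_DIR is an assumed override (not part of the workflow above) so that the function can be pointed at a scratch directory instead of ~/.ssh.

```shell
#!/bin/sh
# Sketch of the "Add SSH key" step as a function.
# install_ssh_material and SSH_DIR are hypothetical names for illustration.
install_ssh_material() {
    ssh_dir="${SSH_DIR:-$HOME/.ssh}"
    mkdir -p "$ssh_dir"
    chmod 700 "$ssh_dir"
    # Strip carriage returns, exactly like the tr call in the workflow.
    printf '%s\n' "$SSH_PRIVATE_KEY" | tr -d '\r' > "$ssh_dir/id_ed25519"
    chmod 600 "$ssh_dir/id_ed25519"
    # Append, so existing known_hosts entries are kept.
    printf '%s\n' "$SSH_KNOWN_HOSTS" | tr -d '\r' >> "$ssh_dir/known_hosts"
    chmod 644 "$ssh_dir/known_hosts"
}
```

With SSH_PRIVATE_KEY and SSH_KNOWN_HOSTS set in the environment, calling install_ssh_material with SSH_DIR pointing to a temporary directory shows which files the step creates and with which permissions.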

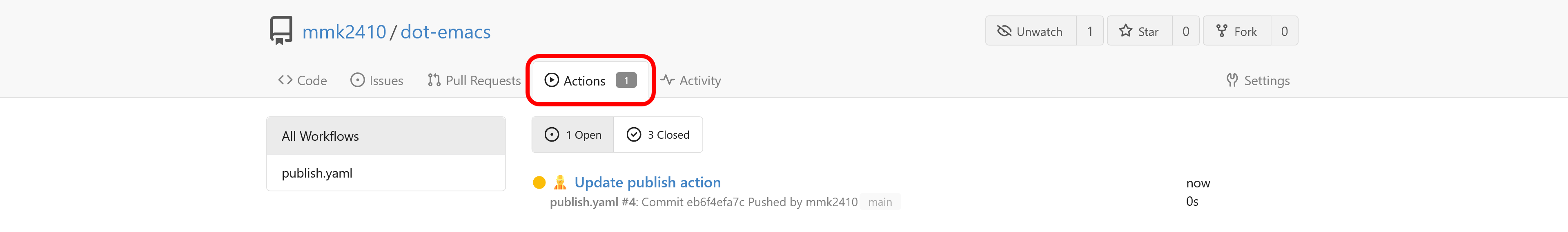

Since the configuration was done and tested in a private repository with a modified upload path, I removed the .gitlab-ci.yml file and pushed the changes to the Gitea repository. We can now see the running pipeline in the "Actions" tab.

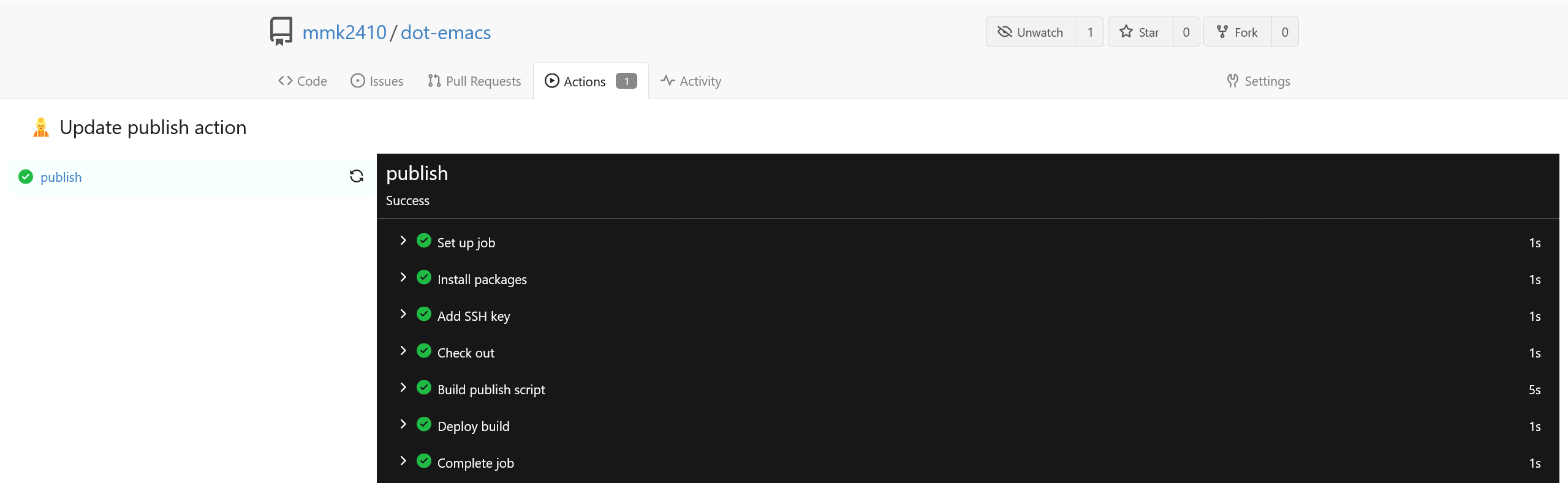

And with a click on the job title we can see the detailed execution and finally some nice green checkmarks.

Interestingly, the whole run takes only 11s on Gitea compared to about 33s on GitLab.com. I don't know if the reason for this is the platform itself or the restriction of the public runners on GitLab.com.

After running into a few problems initially due to my missing knowledge of GitHub Actions, I enjoyed writing and optimizing the pipeline so much that I will not only keep this process but perhaps also migrate my other CI and CD jobs over.

If you want to see the resulting page, head over to config.mmk2410.org.

DONE Quitting 100 Days To Offload @100DaysToOffload

CLOSED: [2022-03-07 Mon 16:08]

- State "DONE" from "TODO" [2022-03-07 Mon 16:08]

I thought about this step for a long time. To be precise, I had my first doubts back at the end of January, just two or three weeks after starting the project. The reasons for considering quitting were a bit different back then, though.

When I decided to jump into the project on January 9th, I had my doubts that I would be able to keep up the writing speed needed to produce 100 blog posts in one year. Therefore, I set myself the task of writing a new post every three days, and this worked surprisingly well. I missed the deadline only once, last Friday. So, during the last two months, I wrote a total of 20 blog posts, which means that I would finish in about ten months if nothing unexpected happened. And even if I needed to stop for some time, I would have enough breathing room.

So, unreachability is and was not a reason for quitting. But what then? Well, at the end of January my doubts came from the output of my statistics dashboard. While I was writing posts that I thought had a certain quality, the visitor numbers were not that high. One or two of my Emacs-related posts were added to the /r/planetemacs subreddit and I think one was even featured in Sacha Chua's famous weekly Emacs News. But besides that, there were no other popular sources, which was quite demotivating. I have to admit, though, that this is for the most part my fault, since I only posted links to the blog posts on my Fosstodon account but not on any other service: neither using my lurking Reddit account nor tweeting from my more-or-less dead Twitter profile.

However, as you can tell, I didn't stop back then but decided to write at least 20 posts to get a feel for the workflow and the reactions instead of quitting too soon. But nothing has changed since then and the twentieth post is now indeed my "resignation letter".

But what is the final reason now? Well, there is not one, but a total of three with different priorities.

The Outcome Problem

The outcome problem described above is still something that bugs me, even if it is not my primary reason. Except for the last few posts (I'll come to that later) I spent some hours on each one: about 2-3 hours of writing, not counting preparation (which cannot be part of the calculation since I would have done these things anyway). At two to three posts a week, that is a total of four to nine hours a week.

The "founder" of the 100 Days To Offload project, Kev Quirk, writes on the project website:

“Someone will find it interesting.”

And I do agree with this statement. There are most probably even two or three people that found the posts I published during the last two months interesting. But is this worth the time I invested?

To answer this question I looked at my Plausible analytics dashboard. If I subtract the incoming traffic related to r/planetemacs and Sacha Chua's weekly Emacs News, the numbers are not that high anymore, and the picture looks even a little bit darker if I also consider the bounce rate and visit duration. To be honest, there are of course many more visitors on my blog than I ever had before, and I'm also aware that the real number is higher still, since some blockers even block self-hosted Plausible instances. But the question is not resolved: Is it worth investing that much time?

I don't think there is a clear answer. Of course, it is not possible to build a "highly popular" blog in just two months, especially if the posts are only shared in one network. But it is also interesting that there was nearly no engagement from the readers. I have to say that I did expect more mails, boosts or messages on Fosstodon. Therefore, together with the other two reasons, I currently have my doubts that what I do at the moment is worth the time.

The Quality Problem

I wrote earlier that I put about 2-3 hours into writing a blog post (without preparation). Well, this is better seen as an average over the last two months. Especially during the last week or two, my motivation to write was nearly at 0 and I didn't invest that much time in writing the posts.

To put it in other words: the quality of my blog posts drastically decreased during the last two months. While I wouldn't say that my blog posts had a high quality at any point I think that during the first 1-1.5 months the readers of the articles could get some interesting or relevant information from most (if not all) posts. In my eyes, the outcome for the readers decreased continuously.

Regarding this, I stumbled upon two quite different opinions. On the one hand, Kev Quirk writes on the 100 Days To Offload website:

“Posts don't need to be long-form, deep, meaningful, or even that well written.”

On the other hand, Ru Singh writes in her post "An end to #100DaysToOffload":

“I want to start focusing a little on quality again.”

The key to reconciling these different statements is the personal goal of one's own blog. If my blog had the goal of offering a window into my life, the project would be easy, since I would not expect the posts to have any meaning or be helpful for someone. But this is not what I want to achieve with this blog. Even though it was a bit different in the distant past, for a few years now I have wanted to post articles that are:

- Either helpful for someone and this includes a certain depth of the information; an example is my post on mirroring my Gitea repos using Git hooks

- Or state a well-founded opinion, which would also require more text than just some "btw. I use Arch" (btw. I don't); an example would be a post on why I don't use an ad blocker

But why did the quality of my posts drop? It was not time; in this regard not much changed during the last two months (well, at least not directly, but I'll discuss this in the next section). While I'm not entirely sure, I think it's the same thing that Ru experienced, too:

“And write when I want to, instead of feeling forced to do it every three days or once a week.”

But quality is not the number one reason why I decided to quit the project, either.

The Focus Problem

Well, probably not the focus you're thinking and it also is not a problem. I'm sorry, but I wanted to keep the headline style… :D

However, focus is the primary reason for quitting. If you're one of the few people who found and read my What I Use page, or you had some spare time and read my about page, then you know that I have some interests besides coding, self-hosting or configuring my system or Emacs.

As I wrote on many of my social projects: I like doing creative things. And while I also see developing software at least in some parts as a creative discipline (naming variables! Joke aside: e.g. problem solving requires being creative) I mainly mean the following areas: music composition, graphic design and photography. I've been interested in these things for a long time, but during the last nearly seven years I didn't have much time for them. But since I started working in November I have more time, and my interest in investing time in these areas is rising steadily.

The problem is just that I started many small "projects" (e.g. self-hosting, a few TYPO3 websites, an event, my unofficial IntelliJ Debian packages, playing around with Emacs, …) during the last few years that, while small, constantly require some amount of time. But my urge to do more creative things is now so large that I want to invest much more time in it than I currently have available. This is the focus I want to shift: away from coding, towards creative projects.

This necessarily means that I will need to stop some things to have the time available that I want to invest. Together with the two reasons mentioned earlier (outcome and quality) it was clear to me that I would stop the #100DaysToOffload project.

Conclusion and Answers for Unasked Questions

To summarize the last three sections: I quit the 100 Days to Offload project because the outcome for me is not what I wanted/hoped, the quality of the posts is decreasing (as well as the motivation) and mainly because I want to focus on other creative areas.

What does this mean to the blog? Will it die? Of course, I won't write any further posts that are part of #100DaysToOffload. But this does not mean that it will die. Writing is a creative discipline and I don't want to stop doing this entirely. There will be new blog posts: Maybe once a month, maybe once a quarter or maybe just once a year. But I won't write them so that I have something to publish. I will write them when I have a topic that I find is worthy of investing the time to write a meaningful and helpful blog post. It is also possible that I will extend the posts I've written during the last weeks so that these are also helpful for the readers.

What does this mean for my other projects? I don't know at this point. Some will maybe die while others will persist, but perhaps with some changes. For example, the themes for the few TYPO3 websites I maintain won't go anywhere because I need them personally. The unofficial IntelliJ IDEA Ubuntu PPA / Debian packages will meet some drastic changes. Until the summer (perhaps even earlier) I want to automate the packaging and deployment process completely so that I don't need to do anything. If this does not work or the automation fails at some later point I cannot promise that I will maintain them any longer. But if this happens I will inform the users in advance. Regarding the Emacs rabbit hole, I'm not sure. Due to some graphical applications only being available on Microsoft Windows and macOS I'm nowadays more often using Windows than Linux. Always starting Emacs through WSL is slow and cumbersome and therefore always demotivates me a bit. Therefore I'm currently not entirely sure if I will switch back to Org-mode for task management at some point and I'm currently also trying Nextcloud Notes with MarkText for notes. But I still need Emacs for work (what else should I use for coding?!) and this won't change anytime soon.

Why the hell did I spend time writing such a long blog post? After all, a single "I quit, want to do other things" would have been enough, wouldn't it? It probably would have, but I felt that I needed to write this lengthy post. For me, it was a great way to sort my thoughts on this and also make my mind up regarding some parts. It may also be a good read for some people who are thinking about trying the #100DaysToOffload project to see the problems others had to deal with. If you're currently thinking about doing this and you are certain about the kind of content you want to produce and the time you have, I absolutely encourage you to do it! Although it was only two months, it still was a great experiment for me and all in all I had some fun with it! The best part was the conversations I had with some readers who really provided some extremely helpful advice. In some way, I think that even though I didn't reach the goal of 100 blog posts (the goal is so far off it is not even visible) the project was a success. Not regarding the #100DaysToOffload idea, but regarding personal growth.

Will I try it again sometime in the future? This is something I don't want to rule out. It is indeed possible that I will again start a #100DaysToOffload journey, but it won't be on this blog (or at least not while I have the same goals as I have now).

At last, I want to finish this post with some final thoughts for my readers.

- If you finished the #100DaysToOffload project yourself I have huge respect for you!

- If you're currently in the middle of it I wish you the best of luck and a ton of fun as well as many nice experiences with your readers!

- If you're thinking about starting or never even thought about writing a blog, I encourage you to do so! Even if you stop after just five or ten posts it is worth the experience in my opinion! And (although this comes from a quitter in this case) quitting something is not a bad thing! Quite the contrary: Not finishing things or dropping a project is something that everyone goes through. Forcing yourself to finish something against your will is a fight against yourself that you cannot win and that is certainly not worth some kind of "DONE" label.

Day 20 (and also my last day) of the #100DaysToOffload challenge.

DONE hledger for personal finances: two months in @100DaysToOffload finance

CLOSED: [2022-03-05 Sat 07:35]

- State "DONE" from "TODO" [2022-03-05 Sat 07:35]

For years I wanted to use some kind of personal accounting system to keep track of where my money goes. This is perhaps mostly rooted in some sick interest or in the idea of managing my expenses better. However, I always failed to successfully implement such a system. I vaguely remember that I used some app once, but only for a short time. I even found some trace of an old Org document where I kept track of my income and expenses from January to mid-March of 2018. The last entry there is from March 17th, and I don't remember what happened back then and why I stopped. The most likely reason is that I had too much to do and forgot to use it.

I also remember that I looked at ledger once or twice, always wondered about the strange format and didn't go any further, mostly because I didn't know how to even start. Nevertheless, in early January (probably more or less exactly two months ago) I decided to start again with accounting. I chose to use hledger for this and so I made myself a warm cup of tea, leaned back and started to read the website and related blogs until I knew enough to get started.

And then I did it! I started to add all my current financial belongings and entered all the expenses starting on January 1st. That was over two months ago, and every day since then I have at least checked if there were new expenses and added them if necessary. Since I have a tendency to quickly forget such smaller tasks, I created two recurring to-do entries in my system: one that recurs every day to update my ledger file and another one that recurs each Sunday to re-check the balances of my different accounts (banks as well as cash).

So, did it help me in any way? I think so… Through the book-keeping, I get a clear overview of two things that I could not check easily before:

- How much money did I spend this month? Or: How much of my income is still left?

- How much money did I spend on what?

Especially the answer to the second question gave me a much clearer understanding of my financial actions. It showed me not only where I should cut back but also that certain expenses are not as high as I thought (relatively speaking). But the first question also helps me a lot to understand how I could use my money, e.g. by putting it into a savings account.

Therefore, I'm really satisfied with hledger! Even if it doesn't save me money directly (which was never really my goal) it makes me understand my transactions better and therefore maybe saves me some bucks indirectly. But also just the insight I get is worth the few minutes that I need every day for maintaining the system.
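The two questions above map roughly onto standard hledger reports. The following is only a minimal sketch: the account names, dates and amounts in the sample journal are invented for illustration and are not taken from my real ledger file, and the reports only run if hledger is installed.

```shell
#!/bin/sh
# Sample journal; all accounts and amounts are made up for illustration.
journal="$(mktemp)"
cat > "$journal" <<'EOF'
2022-01-01 opening balances
    assets:bank:checking      1000.00 EUR
    assets:cash                 50.00 EUR
    equity:opening

2022-01-03 salary
    assets:bank:checking      2000.00 EUR
    income:salary

2022-01-05 supermarket
    expenses:groceries          42.50 EUR
    assets:bank:checking

2022-01-07 bakery
    expenses:groceries           3.20 EUR
    assets:cash
EOF

if command -v hledger >/dev/null 2>&1; then
    # How much did I spend this month, and how much of my income is left?
    hledger -f "$journal" incomestatement --monthly
    # How much did I spend on what?
    hledger -f "$journal" balance expenses --monthly
    # Weekly re-check: compare these with the real bank and cash balances.
    hledger -f "$journal" balance assets
else
    echo "hledger not found; sample journal written to $journal"
fi
```

The posting without an amount (equity:opening, income:salary and so on) is balanced automatically, which keeps the daily entries short.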

Day 19 of the #100DaysToOffload challenge.

DONE My Emacs Package of the Week: CRUX @100DaysToOffload emacs

CLOSED: [2022-03-01 Tue 20:05]

- State "DONE" from "TODO" [2022-03-01 Tue 20:05]

Some packages get mentioned over and over on different blogs and other Emacs-related platforms. And other packages do not seem to get the same degree of attention (or at least I don't see it) although they deserve it. IMO one of these is CRUX, which is most fittingly described as "a Collection of Ridiculously Useful eXtensions for Emacs" by its creator, Bozhidar Batsov. It provides a large collection of helper functions that may assist you in all kinds of situations of your Emacs life. I think I stumbled upon the package when reading an Emacs configuration file of some other fanatic and added it to my configuration after some inspection. And I have not regretted it since!

As with the functionality of Emacs itself, I only use a very small subset of the commands that CRUX provides. There are currently only five functions that I actively use (or intend to use) out of the 32 that are currently provided. So I won't go into full detail about all of them but only briefly cover the ones that sweeten my daily use.

- crux-duplicate-current-line-or-region, which I have bound to C-c C-. and which (as the name already says) duplicates the currently selected text or (if nothing is selected) the current line.

- crux-duplicate-and-comment-current-line-or-region (bound to C-c C-M-. in my setup) is quite similar: it does the duplication but additionally comments out the current line or selection/region. This helps me quite a lot when developing and wanting to test something slightly different for the current line.

- crux-delete-file-and-buffer is another small helper that not only deletes the current file but also its buffer inside Emacs, leaving no trace. Because I know myself and have already cursed a lot while trying to restore completely deleted files and folders (if I remember correctly the theme of this blog once became the victim of such an accident during initial development and before the first Git commit), I deliberately decided not to bind a key to this command. I rather execute it using M-x.

- crux-rename-file-and-buffer, on the other hand, is a completely safe command that helps to rename a file and its associated buffer. Since I need to do this quite often I decided to bind it to C-c M-r.

- crux-top-join-line is another small helper to join lines. This means that the line break and all whitespace (except one space) is removed. To be honest, I don't use this yet, but I have an urgent need for this functionality and will bind it to some key quite soon.

The funny thing is that the functions defined in crux.el are neither that large (or use many helper functions themselves) nor very complex. It would be quite easy to implement most stuff on your own and it would certainly provide a great opportunity for learning a bit of Emacs Lisp (and Emacs). And I'm sure many have implemented at least some functions on their own. While I must admit that from time to time I'm tempted to do the same I am really grateful that this awesome package exists so that I can focus on other things.

If you have not heard of, looked at or tried CRUX yourself, I can only recommend it and encourage you to take a look and see what it can provide for you.

Day 18 of the #100DaysToOffload challenge.

DONE Using stow for managing my dotfiles @100DaysToOffload linux

CLOSED: [2022-02-26 Sat 08:54]

- State "DONE" from "TODO" [2022-02-26 Sat 08:54]

For more than four years, I've been using a self-written installation script for linking my dotfiles. I didn't search for any pre-made solution back then but instead just tried to automate my workflow of creating a symlink for every file in the repository individually. Since I was a big fan of the fish shell back then (and I'm still one) I decided to use it for the script.

The requirements were simple and clear: directories should be created if necessary and files need to get linked from the correct places. Since I didn't want to put all this information in one file (and a programming language is IMO not a good place to store data) I opted for two helper files: dirs.list and links.list. The former just contained a list of directories (each on one line) that should get created, relative to the home directory. The links file contained two paths in each line, separated by a space. The first was the path to the actual file, relative to the dotfiles repo, and the second was the place where the symlink should get created, starting from the home directory.

The script then first created the directories and afterwards the links. This worked quite well. OK… It worked well for one use case: the initial creation of the links. For new links, the script also worked but threw an error for each link that already existed. Additionally, there was no way to delete the links. Finally, I also constantly had to fight with some issues.
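The two-file approach is easy to sketch. What follows is a minimal POSIX shell rendition, not the original fish script; the function name link_dotfiles and its two arguments are made up for illustration. Unlike the old script, it skips links that already exist instead of throwing an error for each of them.

```shell
# link_dotfiles REPO_DIR TARGET_DIR   (hypothetical helper, not the original script)
# Reads REPO_DIR/dirs.list (one directory per line, relative to TARGET_DIR)
# and REPO_DIR/links.list ("source destination" per line, separated by a space).
# Paths containing spaces are not supported by this simple format.
link_dotfiles() {
    repo_dir=$1
    target_dir=$2

    # Create the listed directories first.
    while IFS= read -r dir; do
        [ -n "$dir" ] || continue
        mkdir -p "$target_dir/$dir"
    done < "$repo_dir/dirs.list"

    # Then create the links, skipping those that already exist.
    while read -r src dest; do
        [ -n "$src" ] && [ -n "$dest" ] || continue
        if [ -L "$target_dir/$dest" ]; then
            echo "skipping existing link: $dest"
            continue
        fi
        ln -s "$repo_dir/$src" "$target_dir/$dest"
    done < "$repo_dir/links.list"
}
```

Called as link_dotfiles "$HOME/.dotfiles" "$HOME", this covers creation and repeated runs. Removing links would still need extra code.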

After seeing and reading some people talk/write about GNU stow recently I decided to take a look at it and really liked the workflow. I found that stow is quite easy to use since the program only takes care of managing symlinks and nothing else. Thereby it solves all the shortcomings I had with my custom solutions: I can easily stow new configuration files and also remove all my symlinks.

About my structure: For each application, I have a folder, and in there the dotfiles are stored in the same directory structure as the one where the symlinks will get placed. Additionally, I have three repositories where I keep my dotfiles (a general one for all kinds of configs and two others containing additional sensitive information: one for work and one for personal). I clone the general dotfiles repo to ~/.dotfiles and have the relevant specialized repo inside there. This would mean that "stowing" every folder (aka package) manually would take too much time (and be very boring).

Therefore I wrote a small wrapper script (this time in bash since that's more universally available) that first iterates over the folders I want, executing stow on them. For this, I defined a variable holding a list of folder names that I can overwrite by passing an environment variable to the script. Afterwards, according to the hostname, either the additional work or private dotfiles are stowed using the same principle.
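A wrapper along these lines could look roughly like the following sketch. It is not my actual script: the variable names STOW_PACKAGES and STOW_BIN, the default package list, the repo names and the host name pattern are all placeholders. Only the stow options --dir and --target are real.

```shell
# Packages to stow; can be overridden from the environment (name is an assumption).
STOW_PACKAGES="${STOW_PACKAGES:-emacs fish git}"
# Command to run; set STOW_BIN="echo stow" for a dry run (name is an assumption).
STOW_BIN="${STOW_BIN:-stow}"

# stow_all DOTFILES_DIR PACKAGE...
# Runs stow once per package, linking into $HOME.
stow_all() {
    dotfiles_dir=$1
    shift
    for package in "$@"; do
        # STOW_BIN is left unquoted on purpose so "echo stow" splits into words.
        $STOW_BIN --dir="$dotfiles_dir" --target="$HOME" "$package"
    done
}

# extra_repo_for HOSTNAME
# Prints the name of the additional repo for a host (host names are made up).
extra_repo_for() {
    case "$1" in
        work-*) echo "work" ;;
        *)      echo "private" ;;
    esac
}
```

Calling stow_all "$HOME/.dotfiles" $STOW_PACKAGES (unquoted, so the list splits into words) would stow the general packages. The same function can then be pointed at "$HOME/.dotfiles/$(extra_repo_for "$(hostname)")" for the host-specific ones.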

I just implemented this approach yesterday and didn't have much time to use it thoroughly but until now I'm satisfied.

Day 17 of the #100DaysToOffload challenge.

DONE Small changes to my website design @100DaysToOffload design web

CLOSED: [2022-02-23 Wed 16:29]

- State "DONE" from "TODO" [2022-02-23 Wed 16:29]

For some years until May 2020, I used WordPress for this site with the initial goal to focus more on writing instead of tweaking the templates. If you look in the archive of my blog you may see that this didn't work as intended. So nearly two years ago I decided to switch to a workflow that better suits my needs and set up this page using ox-hugo with hugo and a custom theme.

Back then I was quite satisfied with how it looked and I didn't even change much regarding the design during the last two years. But since I started writing more and visited my page more often I realized that some parts are starting to look a bit dated. Currently, I don't want to create a whole new design (that may be a task for 2023) but tweak it in a way that the page looks somewhat modern again.

The main parts that didn't feel right anymore were the large blocks with the solid purple background color (the navigation bar, the footer and the buttons) and I searched for a different solution there. In the end, I decided to clothe the footer in a modest dark gray and remove the background of the navigation bar completely. For the buttons, I went with a "bordered" design and gave them a nice shadow when hovering. Additionally, I took the sharpness out of the "page" by rounding the corners.

I'm still not completely convinced by the overall appearance since it feels very "dry". What would really help are more images. But that's for another update.

Day 16 of the #100DaysToOffload challenge.

DONE Another Update on Publishing my Emacs Configuration @100DaysToOffload gitlab cicd emacs orgmode

CLOSED: [2022-02-20 Sun 19:39]

- State "DONE" from "TODO" [2022-02-20 Sun 19:39]

A few weeks ago I wrote a post about how I experimented with publishing my Emacs configuration (which is written in Org) using org-publish. Kaushal Modi, the creator of ox-hugo, replied to me and asked me if I had thought about publishing the configuration using ox-hugo. I hadn't! And it turned out that it was done by just adding three lines at the top of my Emacs configuration file, as I wrote in a follow-up post a few days later. I was really astonished and didn't know what to do. Should I choose the org-publish or the ox-hugo path?

Well, after writing the blog post I didn't invest much time in thinking about what solution I should use and just got on with other stuff. Until I made some changes to my Emacs configuration last week and wanted to display these changes online. At this point, I wanted some CI/CD solution so that I don't need to take care of the building and publishing manually.

For some reason, it seemed a little bit easier for me to use the solution I wrote using org-publish instead of importing my dot-emacs repository into the GitLab pipeline (for the sake of completeness: I know that this is not only possible but also quite easy but decisions don't need to be rational all the time ;) ). So I decided to quickly set up my own pipeline for the dot-emacs repository using a slightly adjusted version of the pipeline that builds and publishes my website.

The resulting GitLab CI pipeline configuration (.gitlab-ci.yml) is quite easy (well at least the script for the build stage, admittedly the before_script is not that obvious).

before_script:
  - apk add --no-cache openssh
  - eval $(ssh-agent -s)
  - echo "$SSH_PRIVATE_KEY" | tr -d '\r' | ssh-add -
  - mkdir ~/.ssh
  - chmod 700 ~/.ssh
  - echo "$SSH_KNOWN_HOSTS" | tr -d '\r' >> ~/.ssh/known_hosts
  - chmod 644 ~/.ssh/known_hosts

I first define a before_script for setting up the SSH configuration for uploading the published files to my server.

build:
  image: silex/emacs:27.2-alpine-ci
  stage: build
  script:
    - emacs -Q --script publish/publish.el
    - apk add --no-cache rsync
    - rsync --archive --verbose --chown=gitlab-ci:www-data --delete --progress -e"ssh -p "$SSH_PORT"" public/ "$SSH_USER"@mmk2410.org:/var/www/config.mmk2410.org/

Using the Emacs Docker image from silex I run the publish Emacs Lisp script I wrote earlier, install rsync and upload the resulting website files in the public folder to my webserver.

As you can see I again defined four SSH related variables:

- $SSH_PRIVATE_KEY: The private key for uploading to the server.

- $SSH_KNOWN_HOSTS: The server public keys for host authentication. These can be found by executing ssh-keyscan [-p $MY_PORT] $MY_DOMAIN (from a trusted environment, if possible from the server itself).

- $SSH_PORT: The port at which the SSH server on my server listens.

- $SSH_USER: The user as which the GitLab CI runner should upload the files.

After a few stupid mistakes regarding the place of the publish.el script, the paths in the script and the public/ folder I got it running quite fast and now always have my config.mmk2410.org page up-to-date.

Regarding ox-hugo: As long as the scripts I wrote for using org-publish work I will probably continue using this solution. But if it fails someday in the future and/or I would need to make some larger adjustments I will more likely switch to ox-hugo.

Day 15 of the #100DaysToOffload challenge.

DONE Mirroring my Gitea Repos with Git Hooks, again @100DaysToOffload git selfhosting

CLOSED: [2022-02-17 Thu 18:37]

- State "DONE" from "TODO" [2022-02-17 Thu 18:37]

My Journey

In August 2020 I started hosting all my Git repositories on my own Gitea instance after previously using it for my private projects for some time. Since a self-hosted Gitea instance is not very discoverable I decided to keep showing my repos on GitLab and GitHub. At this point, all my relevant GitLab projects (I used GitLab as my main hosting platform before) were already mirrored to GitHub directly after each commit. So I decided to keep this part and only search for a solution for bringing the data from Gitea to GitLab. Since Gitea did not have anything built-in I searched a bit and finally found some posts showing a way to achieve this with Git hooks. I also wrote a blog post about my setup back then.

Last year Gitea 1.15 came out and included support for mirroring repositories and I decided to switch to that solution since it is much cleaner than using a ~15-line Bash script for each repository. There's just one catch that didn't bother me until recently. Gitea currently doesn't have a feature to mirror after each push but uses a given interval (by default eight hours). For most projects, this is enough and for some that are a little bit more active, I reduced it to four hours.

My Problem

A little bit over a week ago this became a little bit problematic since I'm using GitLab Pipelines for building and publishing my blog post. So after pushing to my Gitea instance I would need to wait for up to four or eight hours until the build finally starts. Of course, that's not what I did.

I manually opened the settings page for my Gitea repo and pushed the "Synchronize Now" button.

This is clearly not a permanent solution and so I already thought about going back to my Git hook solution some days ago. And today I did it! At least for three repos that are either active and/or have a GitLab Pipeline configuration for publishing.

The requirements are a little bit different this time: when switching from Git hooks to the built-in feature I also moved all GitHub mirror configuration from GitLab to Gitea since it doesn't make any sense to keep this configuration separated (and it's also no fun to configure this in the settings menus for every new project). So it is necessary that my new Git post-receive script pushes to both: GitLab and GitHub.

My Solution

I initially started with my previous script and adjusted it a bit by using a for loop iterating over a space-separated string of repository URLs, which worked quite well. But shortly after starting to write this blog post, I had another idea.

Is it really necessary to put an SSH private key in the Git hook script in each repository?

Well, the answer is no! It seems that I learned at least a bit since the last time I did this, and so I connected to my server using SSH. Since I'm not hosting Gitea using Docker but using the binary, it needs to have some "real" user running it. After a cat /etc/passwd I found out that it is not even a system user but a normal one with a normal home directory at /home/git, where all the repositories are stored as well. From there on it was quite clear: I switched to the user and created a set of SSH keys.

sudo -u git -i
ssh-keygen -t ed25519

I copied the public key, added it to my GitLab and GitHub profiles and adjusted my post-receive Git Hook scripts to just push and not store a private SSH key.

#!/usr/bin/env bash

set -euo pipefail

downstream_repos="git@gitlab.com:mmk2410/dotfiles git@github.com:mmk2410/dotfiles"

for repo in $downstream_repos
do
    git push --mirror --force "$repo"
done

The result is just a script with 10 lines that simply iterates over a list of repository URLs and force-mirror-pushes to each one of them. I don't need to care about any authentication in the scripts since it is executed using the git user and thereby authenticates to GitLab and GitHub using the previously generated SSH key.

It's so easy that I'm really wondering why I didn't have this idea the last time.

And some final warnings

A little note to everyone who wants to try this at home. If you're hosting a Gitea instance that multiple people use then you should make sure that only you can add Git hooks, since everyone who can define Git hooks can run any command on your system. There is no additional security layer. That's also the reason why Git hooks are disabled by default in Gitea. Using the correct configuration option you can change this.

Another note on performance, if you care for this: your git push executions will take longer since the post-receive hook on the server is run during the execution (at the end, of course, but still) and it may take a little while. I also don't know yet what will happen if one of the remote repositories (or their host) has a temporary outage. Be warned that your push command will probably hang if this happens.
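One way to soften the outage problem might be to put a time limit on every push and carry on with the next remote if one fails. The following is only a sketch under assumptions, not what I currently run: the names mirror_all, MIRROR_PUSH_CMD and MIRROR_TIMEOUT are invented, the 30 seconds are arbitrary, and it relies on the timeout command (GNU coreutils or BusyBox) when that is installed.

```shell
# Command used for a single mirror push; override for a dry run (name is an assumption).
MIRROR_PUSH_CMD="${MIRROR_PUSH_CMD:-git push --mirror --force}"
# Seconds to wait per remote (an arbitrary value, adjust as needed).
MIRROR_TIMEOUT="${MIRROR_TIMEOUT:-30}"

# mirror_all REPO_URL...
# Pushes to every remote, gives up on one after MIRROR_TIMEOUT seconds and
# carries on with the next. Returns the number of failed pushes.
mirror_all() {
    failed=0
    for repo in "$@"; do
        if command -v timeout >/dev/null 2>&1; then
            # MIRROR_PUSH_CMD is unquoted on purpose so it splits into words.
            timeout "$MIRROR_TIMEOUT" $MIRROR_PUSH_CMD "$repo" || failed=$((failed + 1))
        else
            # No timeout(1) available: push without a time limit.
            $MIRROR_PUSH_CMD "$repo" || failed=$((failed + 1))
        fi
    done
    return "$failed"
}
```

In the hook this would replace the loop, e.g. mirror_all $downstream_repos || echo "some mirrors failed" >&2, so that a failing remote produces a message instead of aborting the remaining pushes.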

Day 14 of the #100DaysToOffload challenge.

DONE Why I failed using Org-mode for tasks @100DaysToOffload orgmode emacs pim

CLOSED: [2022-02-14 Mon 14:58]

- State "DONE" from "TODO" [2022-02-14 Mon 14:58]

I started using Emacs back in 2016 and discovered Org-mode a little while after that (I don't know the exact date but I have tasks in my archive going back to 2018 and I know that I used it for some time without the archiving functionality). For some time my bio on Fosstodon even contained the line „couldn't survive without Org-mode“ and yet I haven't used it for two months.

Well, this is not entirely true. I still use Org-mode with its Agenda for tasks at work, I just stopped using it for my out-of-work things. OK… I need to make another slight adjustment to this statement. I didn't stop two months ago, it was much earlier. Though I couldn't name an exact date or even a month. It was a gradual process.

Finding the Perfect Tool

„But why?” you may ask.

There are two answers to be given here. On the one hand why I stopped using it and on the other hand why I failed using it in the first place. When I started using Org-mode it had an interesting effect on me: it felt right. I could adjust it to my needs, I really used it, I worked with the tasks and I could trust it. Storing a note in there was really reliable for me. I could count on the system to help me deal with it and I was sure that it would not get forgotten in there.

Error: Task Overflow

After some time (I think until 2019) this still worked perfectly but I didn't use it anymore for all my tasks. To be precise it became quite hard to deal with it. I worked with my tasks by scheduling every single one and at one point there were way too many tasks each day. I didn't re-schedule them to a later date, I just let them stay. In the end the list was far too long to deal with anymore and so my usage slowly decreased. And a to-do system that is not used is not a good to-do system.

A while later (I think it was 2020) I decided to reform the process to make it usable again. My main decision was to not schedule any tasks at all but to use Org Super Agenda for grouping the tasks and making them easily discoverable. Well, this worked a little bit… I mean, it was not a total failure but it quickly became only a task management tool for larger projects and habits. Only a few smaller tasks had the “opportunity” to get added there.

Fleeing from the Beast

Especially during the last quarter of 2021 I more and more recognized this. It went that far that I decided in early December that I cannot use Org-mode for To-dos anymore. At least not with this configuration and so I made myself a small plan to change this:

- Use a completely different tool for a limited time (for about one year)

- Read up on task and to-do management

- Recognize the problems with the old Org-mode configuration

- Recognize the requirements for a task management tool

- Configure Org-mode to fulfill these requirements

- Switch back to Org-mode (after about one year)

Working from Exile

I immediately started searching for a tool that works flawlessly. I tried the tasks features of CalDAV with my Nextcloud instance (and the Tasks app) as well as with my email hosting provider mailbox.org. I could not work with it. It was much too complicated and UX-unfriendly for me to use as a to-do system. And so I finally decided to go with a tool that apparently works for millions: Todoist. I'm really not a friend of such centralized, more or less privacy-respecting companies, but after using it for two months now I have to admit that it really works for me. It may be completely subjective but it seems to me as if I get more things done than ever before. At least I add all the to-dos I need to deal with and I always (OK, sometimes I forget to check off already done tasks in the evening) finish my day with all tasks either done or mindfully rescheduled.

In the meantime I already started with the second step. I read a few articles online and bought the “Getting Things Done” book by David Allen. Although I have not even finished the first chapter I can already get some value from it in how I create and manage my to-dos.

Diagnosing the Failure

Regarding the third step: why did I fail to use it twice? And I mean fail and not stop, since it was me who used and configured the system in a way that made it unusable.

Although I still don't have much experience I think that the main reason was wrong task management. Having a gigantic list of tasks in front of you is not motivating and doesn't help to actually work on them. Having many tasks (perhaps even the larger part) annotated with a message that the task was already scheduled some months ago and still occurs every day is also no motivation boost. And, regarding my second setup, not scheduling tasks but needing to search through them every time I want to get something done is also not helpful at all. The nice and easy tasks get done then but the more difficult ones get lost in endless lists of to-dos.

I'm still just at the beginning of the journey of learning more about task management and setting up my Org-mode in a way that works. Further articles about this will surely follow!

Day 13 of the #100DaysToOffload challenge.

DONE Using Emacs tab-bar-mode @100DaysToOffload emacs

CLOSED: [2022-02-11 Fri 21:04]

- State "DONE" from "TODO" [2022-02-11 Fri 23:04]

Everyone knows tabs. From your favorite web browser, your file manager, your terminal emulator and perhaps many other programs. And if you know Emacs or have heard anything about it you perhaps wouldn't be surprised if I told you that it has not one, but two tab modes. There is tab-line-mode, which is equivalent to what we know from other editors or the browser: one "thing" (file, window, buffer, whatever) per tab.

But there is also tab-bar-mode, which works a little bit differently: instead of having one file per tab you have one window configuration per tab. Let's say we're working on three different projects at a time. Then we could have one tab (let's give it the name dotfiles) which has two windows (e.g. my zsh and fish configurations), split equally horizontally. Our next tab is named API and contains three windows, two files and an eshell buffer (e.g. one horizontal split and in the left half an additional vertical split). And in the third tab there are our files corresponding to the frontend project. Let's say there is just one window taking the complete space. With tab-bar-mode it is now possible to switch between these tabs: I can adjust the window layout in one tab, go to another tab, and still find the same layout when I come back. For code projects I have exactly this workflow of using the tabs as workspaces.

But I also use tab-bar-mode for some more general stuff. Normally I have one Emacs frame open in which I actively work (be it coding or writing or something else that gets my main attention). And one frame (either on a second monitor, on another virtual desktop or just in the background) where I keep stuff like mail or agenda. To get a good overview and quickly switch between these “meta” buffers I have a separate tab for each of them:

- Mail with mu4e

- Agenda with Org

- Journal with org-journal

- Random org file with relevant notes, e.g. my projects.org file

- IRC with ERC

- RSS with Elfeed

Although I don't necessarily have all of them open all the time.

The problem is just that it is quite cumbersome to initially open them. I need to create a new tab with C-x t 2 and then run the required command, e.g. C-c m for starting mu4e. With about six open tabs switching is also not that efficient. I could tab around using C-TAB or C-SHIFT-TAB or search with C-x t RET (this presents a search field with completion for the open tabs).

What would be really handy are some keybindings for switching to a certain tab that also create the tab and run the necessary commands if it doesn't exist yet.

This itched me already some months ago and initially I wrote a large function which would open all the tabs and start the clients or open buffers. Additionally I had a small command for each of them that would switch to the correct tab and bound them to a keybinding. While it was working somehow at some point I constantly started commenting out parts of the large initial run function because I didn't want to run necessarily everything if I only need a mail client and an agenda.

Yesterday I took some time to find a better solution for this problem and came up with a few handy functions.

(defun mmk2410/tab-bar-switch-or-create (name func)
  (if (mmk2410/tab-bar-tab-exists name)
      (tab-bar-switch-to-tab name)
    (mmk2410/tab-bar-new-tab name func)))

In working through the problem I thought that I essentially need some more or less abstract function that checks whether a tab with a given name already exists and, if not, creates one using a given function. mmk2410/tab-bar-switch-or-create does exactly this.

(defun mmk2410/tab-bar-tab-exists (name)
  (member name
          (mapcar #'(lambda (tab) (alist-get 'name tab))
                  (tab-bar-tabs))))

After browsing the source code of tab-bar a bit and reading some Emacs Lisp pages I came up with this little helper for determining if a tab with a given name already exists. It uses the function (tab-bar-tabs), which returns all existing tabs as a list of attribute lists over which I iterate (mapcar) and extract the tab name (alist-get 'name tab). The member function then tells me if the given name is a member of the list of all names of existing tabs.

(defun mmk2410/tab-bar-new-tab (name func)
  (when (eq nil tab-bar-mode)
    (tab-bar-mode))
  (tab-bar-new-tab)
  (tab-bar-rename-tab name)
  (funcall func))

The tab creation part was a bit easier. I wrote this simple function which enables tab-bar-mode in case it is not already running, creates a new tab with the given name and runs the given function for setting the new tab up.

What's left to do? Writing the specific functions for the different programs or files. Essentially all are interactive (this means that I could also execute them via M-x) and call mmk2410/tab-bar-switch-or-create with a tab name and either a function name, e.g. elfeed, or a lambda function with some instructions. The following blocks show the functions I have currently configured.

(defun mmk2410/tab-bar-run-elfeed ()
  (interactive)
  (mmk2410/tab-bar-switch-or-create "RSS" #'elfeed))

(defun mmk2410/tab-bar-run-mail ()
  (interactive)
  (mmk2410/tab-bar-switch-or-create
   "Mail"
   #'(lambda ()
       (mu4e-context-switch :name "Private") ;; If not set then mu4e will ask for it.
       (mu4e))))

(defun mmk2410/tab-bar-run-irc ()
  (interactive)
  (mmk2410/tab-bar-switch-or-create
   "IRC"
   #'(lambda ()
       (mmk2410/erc-connect)
       (sit-for 1) ;; ERC connect takes a while to load and doesn't switch to a buffer itself.
       (switch-to-buffer "Libera.Chat"))))

(defun mmk2410/tab-bar-run-agenda ()
  (interactive)
  (mmk2410/tab-bar-switch-or-create
   "Agenda"
   #'(lambda ()
       (org-agenda nil "a")))) ;; 'a' is the key of the agenda configuration I currently use.

(defun mmk2410/tab-bar-run-journal ()
  (interactive)
  (mmk2410/tab-bar-switch-or-create
   "Journal"
   #'org-journal-open-current-journal-file))

(defun mmk2410/tab-bar-run-projects ()
  (interactive)
  (mmk2410/tab-bar-switch-or-create
   "Projects"
   #'(lambda ()
       (find-file "~/org/projects.org"))))

I also wrote that I want to have these functions available with some keybinding. A few days ago I first dealt with hydra and I have to say that I really like it! Therefore I chose to define a hydra configuration for these functions that is accessible with C-c f.

(defhydra mmk2410/tab-bar (:color teal)
  "My tab-bar helpers"
  ("a" mmk2410/tab-bar-run-agenda "Agenda")
  ("e" mmk2410/tab-bar-run-elfeed "RSS (Elfeed)")
  ("i" mmk2410/tab-bar-run-irc "IRC (erc)")
  ("j" mmk2410/tab-bar-run-journal "Journal")
  ("m" mmk2410/tab-bar-run-mail "Mail")
  ("p" mmk2410/tab-bar-run-projects "Projects"))

(global-set-key (kbd "C-c f") 'mmk2410/tab-bar/body)

After using it a little bit today I'm quite satisfied. There are just a few things I would like to change, e.g. I want to have the journal and agenda in the same tab. But I think this will be easy to achieve. Another thing that I may want to add is a possibility to replace or use the current tab instead of creating a new one. But I'm currently not sure how I could do this nicely.

As you may or may not have noticed already: I don't have much experience in writing Emacs Lisp code and there are certainly things that could be improved. If you have some suggestions feel free to write me a mail!

Day 12 of the #100DaysToOffload challenge.

Publishing my Website using GitLab CI Pipelines @100DaysToOffload hugo emacs orgmode

- State "DONE" from "TODO" [2022-02-08 Tue 22:05]

I wrote some posts recently, like “Update on Publishing my Emacs Configuration”, where I mention that my current workflow of deploying changes to my website can be improved. Well, I could always improve it, but this is one of the more urgent things.

The Status Quo

Currently, after writing a blog post or changing a page, I export it by calling the relevant ox-hugo exporter using the Org export dispatcher. This places the exported files in the content directory. When I'm ready to publish I run my “trusty” script which removes the current public folder (the place where hugo dumps all its files), runs hugo to generate all files from scratch and uploads the result with rsync.
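The three steps of that script can be sketched as below. This is a reconstruction under assumptions, not the script itself: the helper run, the DRY_RUN switch, the function name publish_site and the exact rsync options are chosen for illustration, and the remote destination is passed in rather than hard-coded.

```shell
# run CMD...: execute a command, or only print it when DRY_RUN is set.
run() {
    if [ -n "${DRY_RUN:-}" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# publish_site REMOTE
# REMOTE is an rsync destination such as user@host:/path/ (placeholder).
publish_site() {
    run rm -rf public                           # drop the previous build
    run hugo                                    # regenerate everything into public/
    run rsync --archive --delete public/ "$1"   # upload and remove stale files
}
```

Running it with DRY_RUN=1 only prints the three commands, which is a cheap way to check the destination before anything is deleted or uploaded.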

There is just one problem with this approach. I'm often using a different environment than the last time to edit the site. Sometimes I use another laptop, sometimes another operating system and sometimes even both. I don't want to switch them just for writing a blog post but I want to use what's currently running. For publishing the source code, working with multiple environments and not least to have some version control, I keep my website in a Git repository. If you ever used Git with more than one machine you know that forgetting to pull before starting to work on something (or in even worse situations after making a commit) happens almost on a regular basis. While it's no fun to deal with this, at least you realize it. Git will scream at you until you get it right.

But there's another thing that doesn't scream. That doesn't say one word: blog posts and updated pages that are not exported don't scream. They are so quiet that I only notice by chance that they are missing on the website after uploading my page. And believe me: this did not happen only once!

“But why don't you just include a script to export everything before publishing?”

Because it takes horribly long. I have over 100 blog posts and 366 posts from my Project 365 in 2015. So some other solution is obviously needed!

The new workflow

This “other solution” is called continuous deployment. Let me outline shortly what I want. While I host my Git repositories on my Gitea instance and only mirror to GitHub and GitLab I currently have no own continuous integration / pipeline runner (I tried Woodpecker but don't want to run it on my main server and I don't need it that much that it is worth renting another VPS). So I decided to use GitLab Pipelines for this. The pipeline will run on every push and thereby build and deploy the website.

The Export Script

For the build step I wrote a short Emacs Lisp script that I'll discuss in parts.

(package-initialize)
(add-to-list 'package-archives '("nongnu" . "https://elpa.nongnu.org/nongnu/") t)
(add-to-list 'package-archives '("melpa" . "https://melpa.org/packages/") t)
(setq-default load-prefer-newer t)
(setq-default package-enable-at-startup nil)
(package-refresh-contents)
(package-install 'use-package)
(setq package-user-dir (expand-file-name "./.packages"))
(add-to-list 'load-path package-user-dir)
(require 'use-package)
(setq use-package-always-ensure t)

The first part (well, nearly half the script) installs and loads the necessary packages. I added the NonGNU ELPA and MELPA as package archives since I will most likely need packages from them in the future, although I currently only need ox-hugo, which is available on MELPA. I install and load the packages using use-package since in my opinion this provides a clean structure.

(use-package org
  :pin gnu
  :config
  (setq org-todo-keywords '((sequence
                             "TODO(t!)" "NEXT(n!)" "STARTED(a!)" "WAIT(w@/!)" "SOMEDAY(s)"
                             "|" "DONE(d!)" "CANCELLED(c@/!)"))))

Of course I load Org and also define my org-todo-keywords list. ox-hugo will respect this and only export posts that don't have a keyword or have a keyword from the done part (the entries after the | (pipe)). To be honest I'm currently not using this feature for published blog posts since posts with a to-do-state would be visible in the public repos anyway. But I wanted to write the script as general as possible.

(use-package ox-hugo
  :after org)

For using ox-hugo I'm using ox-hugo, duh…

(defun mmk2410/export (file)
  (save-excursion
    (find-file file)
    (org-hugo-export-wim-to-md t)))

Then I define a small function that opens a given file and calls the ox-hugo exporter which exports the complete content (all posts/pages) of the current file.

(mapcar (lambda (file) (mmk2410/export file))
        (directory-files (expand-file-name "./content-org/") t "\\.org$"))

And finally I run this function for every file in my content-org directory. Currently there are only three but who knows what will happen in the future.

The Pipeline Configuration

For the upload SSH configuration I followed the corresponding GitLab documentation.

I started by creating a new user on my server and, using that user, a new SSH ed25519 key pair. Then I added the public key to the ~/.ssh/authorized_keys file and granted the user rights to write to the root directory of my website. Afterwards I defined some necessary CI variables in GitLab for connecting with this user.
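The step of registering the public key is easy to get subtly wrong (file name, permissions, duplicate entries), so here is a small sketch of it. The function name authorize_key and its arguments are hypothetical; it only assumes the conventional ~/.ssh/authorized_keys location and the usual restrictive permissions.

```shell
# authorize_key PUBKEY_FILE HOME_DIR   (hypothetical helper)
# Appends the public key to HOME_DIR/.ssh/authorized_keys unless it is
# already listed, and applies the usual restrictive permissions.
authorize_key() {
    pubkey_file=$1
    ssh_dir=$2/.ssh

    mkdir -p "$ssh_dir"
    chmod 700 "$ssh_dir"
    touch "$ssh_dir/authorized_keys"
    chmod 600 "$ssh_dir/authorized_keys"

    # -q: quiet, -x: match the whole line, -F: treat the key as a fixed string
    if ! grep -qxF -e "$(cat "$pubkey_file")" "$ssh_dir/authorized_keys"; then
        cat "$pubkey_file" >> "$ssh_dir/authorized_keys"
    fi
}
```

Logged in as the new user, a call like authorize_key ~/.ssh/id_ed25519.pub "$HOME" would do the job. Running it a second time leaves the file unchanged.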

- $SSH_PRIVATE_KEY: The private key for uploading to the server.

- $SSH_KNOWN_HOSTS: The server's public keys for host authentication. These can be found by executing ssh-keyscan [-p $MY_PORT] $MY_DOMAIN (from a trusted environment, if possible from the server itself).

- $SSH_PORT: The port on which the SSH server on my server listens.

- $SSH_USER: The user as which the GitLab CI runner should upload the files.

Using these variables I can now write my .gitlab-ci.yml pipeline configuration.

variables:

  GIT_SUBMODULE_STRATEGY: recursive

Since I keep my own Hugo theme in a separate repository and import it as a Git submodule, I can ask GitLab to be nice and clone it for me.

before_script:

- apk add --no-cache openssh

- eval $(ssh-agent -s)

- echo "$SSH_PRIVATE_KEY" | tr -d '\r' | ssh-add -

- mkdir ~/.ssh

- chmod 700 ~/.ssh

- echo "$SSH_KNOWN_HOSTS" | tr -d '\r' >> ~/.ssh/known_hosts

- chmod 644 ~/.ssh/known_hosts

The script then continues with a lot of SSH voodoo. After installing OpenSSH and starting the ssh-agent, I add the private key to the agent and the server's public keys to the known_hosts file.

build:

  image: silex/emacs:27.2-alpine-ci

  stage: build

  script:

    - emacs -Q --script .build/ox-hugo-build.el

    - apk add --no-cache hugo rsync

    - hugo

    - rsync --archive --verbose --chown=gitlab-ci:www-data --delete --progress -e"ssh -p "$SSH_PORT"" public/ "$SSH_USER"@mmk2410.org:/var/www/mmk2410.org/

Then it gets a little more obvious. Using the Emacs 27.2 Alpine image by silex, I already get the necessary Emacs installation and just need to run the Emacs Lisp script from above with it. Then I install the dependencies for the next steps. First I build the page with hugo and finally I upload the resulting public/ directory to my server using rsync. I define the ssh command with -e since there seems to be no other way to set an SSH port. With the --delete option I also remove posts and files that I removed from the repo or that are no longer built.

  artifacts:

    paths:

      - public

As a small gimmick I also publish the public directory of my website as a build artifact. There is no real reason for this, but I started out only building the blog a few days ago and didn't implement the deploy part until today. Maybe it will come in handy some day, or I'll delete that part sooner or later.

You can find the complete files in my repository.

Next Steps

While Gitea currently has a mirror feature, it runs on a timer and not after each push. This means that I would either have to wait quite some time for Gitea to push the changes to GitLab or trigger the sync manually using the web frontend. Currently I'm doing the latter, but this is not a good solution. I'm thinking about going back to my own workflow by declaring a server-side Git post-receive hook for mirroring.

Another step is improving the .gitlab-ci.yml file. Adding rules to only run the pipeline on pushes to the main branch and splitting the one step into a build and a deploy step are things that I want to do quite soon.

Finally I also need to decide whether to continue publishing my Emacs config using Org publish and the config.mmk2410.org subdomain or whether I want to use ox-hugo for exporting to the /config path. In the latter case I would need to further adjust the pipeline configuration; otherwise I would need to write a separate pipeline.

As always, I'll keep you posted!

Day 11 of the #100DaysToOffload challenge.

DONE My Emacs package of the week: org-appear @100DaysToOffload emacs orgmode

CLOSED: [2022-02-05 Sat 08:37]

- State "DONE" from "TODO" [2022-02-05 Sat 08:37]

It may be a little boring for some, but the second post in my “My Emacs package of the week” series is again about an Org-mode package (well, if you follow my blog you shouldn't be surprised). I use Org-mode a lot (though I used to use it more; a blog post about this is coming soonish), so from time to time I notice things that I would like to be a little bit different, or I stumble upon packages, either because I see someone else using them, by browsing some social networks or by reading my RSS feeds, e.g. Sacha Chua's weekly Emacs news. This one I found in the Emacs configuration of David Wilson.

Next to functionality I also like to have a somewhat comfortable editing environment. Therefore I have been using variable-pitch-mode for a few months (for those who don't know what this is: it changes the font to something that is not fixed width, in my case currently Open Sans) and also org-superstar-mode to display nice UTF-8 bullets instead of just some raw stars *. Using ▼ for collapsed sections instead of the default ... also makes the view a little bit nicer.

Additionally I took a bit of configuration from the System Crafters' Emacs from Scratch config for narrowing the text width so that I can also edit my text with Emacs being maximized or displayed full screen.

(defun efs/org-mode-visual-fill ()

(setq visual-fill-column-width 100

visual-fill-column-center-text t)

(visual-fill-column-mode 1))

(use-package visual-fill-column

:hook (org-mode . efs/org-mode-visual-fill))

(add-hook 'org-mode-hook (lambda ()

(display-line-numbers-mode -1)

(variable-pitch-mode)))

Finally I'm hiding all the emphasis markers such as *, /, =:

(setq org-hide-emphasis-markers t)

Now what I see looks quite clean and makes writing a bit nicer (or at least I think so…). For writing blog posts, for example, I use Emacs in full screen and additionally narrow the buffer using org-narrow-to-subtree, which makes the whole process quite distraction-free.

Although this may sound very nice, there is some part about this that regularly drives me nuts! Can you spot it?

It is the hidden emphasis markers! While it really looks clean when they are hidden, it makes emphasised content hard to edit, especially if I need to change something at the beginning or end or even delete the markers. This is a constant game of "Well, let's try starting to delete here… Hmm, no, didn't work… What about here?… Still not… Here? Aaah, finally!!!". As you can imagine, there are better things in life. awth13 apparently thought the same and created a package to solve this annoyance: org-appear.

What org-appear does is show the emphasis markers only when needed, that is, when my cursor is on the emphasised content. The problem of finding the markers or editing the content at the beginning or end of the emphasised section therefore goes away.

So I decided to install the package and enable it for all Org-mode buffers. The package is available on MELPA.

(use-package org-appear

:after org

:hook (org-mode . org-appear-mode))

If I open a new Org file now, I see it (more or less) nicely formatted but I'm still able to edit my document effortlessly without any annoyances (or at least without any annoying hidden or shown emphasis markers).

org-appear offers more options than displaying the emphasis markers on “hover”, though. It is also possible to toggle the full display of links (URL + description with the brackets instead of just the description) by setting org-appear-autolinks to t. Other toggling possibilities include keywords (as defined in org-hidden-keywords), entities and submarkers (i.e. the markers for subscripts and superscripts).

The customization options don't stop there. It is also possible to configure a delay before the markers appear after the cursor enters the emphasised part by setting org-appear-delay, and/or to only toggle in certain circumstances, e.g. after a change was made. It is even possible to take complete control over the “toggling” by setting org-appear-trigger to manual and using the org-appear-manual-start and org-appear-manual-stop functions (perhaps by binding them to some key(s)).

For me personally the default settings are perfect. I don't want to configure a delay since this may be too slow in certain situations, and I prefer the default behaviour of org-insert-link for setting or updating links. All in all the package is a very good addition to my workflow and I can only recommend it to everyone in need of a similar solution.
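For illustration, the options mentioned above could be set like this. It is only a sketch of a possible configuration, not the one I use (I stay with the defaults); the delay value of 0.5 seconds is an arbitrary assumption.

```emacs-lisp
;; Sketch: org-appear with the optional toggles enabled.
(use-package org-appear
  :after org
  :hook (org-mode . org-appear-mode)
  :custom
  ;; Show the full link (URL + description) while the cursor is on it.
  (org-appear-autolinks t)
  ;; Show subscript/superscript markers; only has an effect when
  ;; `org-pretty-entities' is enabled.
  (org-appear-autosubmarkers t)
  ;; Show the source of entities; also relies on `org-pretty-entities'.
  (org-appear-autoentities t)
  ;; Show keywords listed in `org-hidden-keywords'.
  (org-appear-autokeywords t)
  ;; Wait 0.5 seconds (arbitrary value) before revealing the markers.
  (org-appear-delay 0.5))
```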

Day 10 of the #100DaysToOffload challenge.

DONE Update on Publishing my Emacs Configuration @100DaysToOffload emacs orgmode hugo web

CLOSED: [2022-02-02 Wed 20:42]

- State "DONE" from "TODO" [2022-02-02 Wed 20:42]

After I posted my last blog article about publishing my Emacs configuration on Fosstodon, Kaushal Modi (the maintainer of ox-hugo, the Org-mode to Hugo exporter that I use for my blog) wrote to me and suggested publishing my Emacs configuration using ox-hugo and Hugo. I hadn't even thought about that, so I tried it the same evening. If you've read my previous blog post you know the amount of code and work that is necessary to get org-publish running. With ox-hugo I only need to add the following three lines at the top of my config.org file.

#+HUGO_SECTION: config

#+HUGO_BASE_DIR: ~/projects/mmk2410.org/

#+EXPORT_FILE_NAME: index

That's all, you may wonder? Well… I also need to export the file. For me these are the keys: C-e H H (for normal people that is: CTRL+e followed by H and again followed by H). That's it. Crazy, isn't it! Running hugo serve and navigating to http://localhost:1313/config showed my complete configuration, nearly the same as with org-publish. (Yes, as of 2022-02-02 you can find this version of the config at mmk2410.org/config, but don't share or save this link as I may or may not remove the page soon; use config.mmk2410.org instead.) The only difference is that the slight theme adjustments I made for the org-publish configuration are not there (duh…) and there is no table of contents. But the TOC is another problem anyway since it is, in my opinion, too large to display directly on the page, as I already wrote in the other post.

The other "next step" I mentioned there was to automatically run the org-publish configuration and publish the new config page after pushing a change. This is also something I need to do with my blog. I currently write blog posts from two different machines and three different operating system installations, and remembering to run a git pull via Magit before starting to write is already hard enough for me. Since my hugo publish script only runs hugo to build the site, but not Emacs and ox-hugo beforehand to export the latest state of the posts, I uploaded an incomplete website more than once last month. So either I adjust the script to run some Emacs snippet for running ox-hugo (and including the config export would be easy there) or I go the “DevOps” way and configure a pipeline that runs on every commit, exports the articles, builds the page and publishes it somehow. So the automation is something that I need to do anyway.

This puts me in a difficult position: what should I do? On the one hand the org-publish approach is very "emacsy" and therefore fits the project of publishing an Emacs configuration really well; on the other hand it is by far easier to use ox-hugo for this. I'm still not sure what to do, but I want to decide quite soon since the current workflow of manually publishing two websites is slowly starting to annoy me, especially since I edit both quite often.

I'll keep you posted!

Day 9 of the #100DaysToOffload challenge.

DONE Publishing My Emacs Configuration @100DaysToOffload web emacs orgmode

CLOSED: [2022-01-30 Sun 20:19]

- State "DONE" from "TODO" [2022-01-30 Sun 20:19]

Introduction

As you may know, I'm using Emacs for various tasks and I have a configuration for doing so. I think that documentation is an important part of a configuration, especially if it is not something I read or work with every day and I want to read up on certain things and decisions after a long time. That's why I chose to write my Emacs configuration using literate programming with Org Babel. This means that I have one large Org-mode file (currently 2265 lines) with headings, text and Emacs Lisp source code blocks which are my actual configuration and which get read and evaluated on Emacs startup. There are multiple ways of achieving this and I adopted the approach taken by Karl Voit.

Writing such a configuration is not done on the first day of using Emacs, and so during the past years I have probably learned most of what I know about Emacs by reading the config files of other users. I'm really grateful to all the people who made their corresponding Git repositories public.
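For readers who have never seen such a setup: probably the simplest of these ways is a single call in init.el. This is only a minimal sketch using Org's built-in org-babel-load-file; it is not the approach by Karl Voit that I actually use, and the file name config.org inside user-emacs-directory is an assumption.

```emacs-lisp
;; init.el (sketch): tangle the Emacs Lisp blocks of the literate
;; configuration into a .el file and load it.
;; Assumption: the Org file is called config.org and lives in
;; `user-emacs-directory'.
(require 'org)
(org-babel-load-file
 (expand-file-name "config.org" user-emacs-directory))
```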